The Great Inference Pivot: Why the “Sovereign AI” Winner Won’t Be a Chip Maker

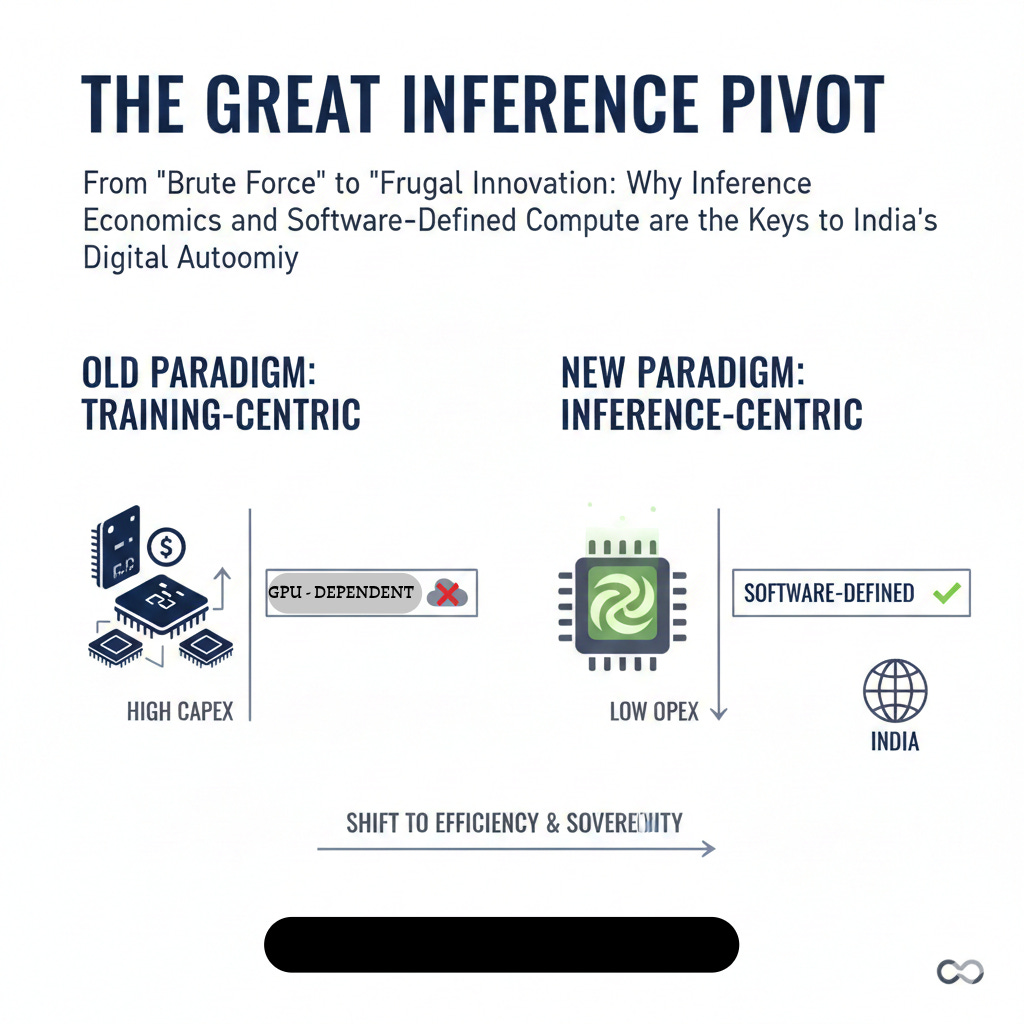

From "Brute Force" to "Frugal Innovation": Why Inference Economics and Software-Defined Compute are the Real Keys to India’s Digital Autonomy.

In the gold rush of the early 2020s, the world was obsessed with the “Training Race.” The headlines were dominated by who had the most H100s, the biggest clusters, and the most massive datasets.

But as we move through 2026, the vanity metrics of training are fading. We are entering the era of Inference Economics.

As an evangelist at the intersection of FinOps, Sustainability, and Infrastructure, I’ve spent the last year analyzing the “Great Split” in the AI stack. If you are a CXO, a policymaker, or a tech strategist, your 2026 ROI isn’t hidden in how you train your models—it’s hidden in how you deploy them.

🧠 The Definition: Training vs. Inference

To understand the strategy, we must first define the stages of the AI lifecycle:

Training (The Education Phase): This is the massive, one-time capital expenditure (CapEx). It’s where a model “learns” from data. It requires thousands of high-end GPUs, months of time, and enough electricity to power a small city.

Inference (The Working Phase): This is the ongoing operational expenditure (OpEx). Every time a user asks a chatbot a question or a system analyzes a sensor feed, that is inference. By mid-2026, inference will account for nearly 70% of all AI compute spend.
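To make the CapEx-versus-OpEx split concrete, here is a minimal back-of-the-envelope sketch. The function names and the dollar figures are hypothetical, chosen only for illustration; they are not measurements and not the source of any figure quoted in this post.

```python
def inference_share(training_cost, monthly_inference_cost, months):
    """Fraction of cumulative AI spend that went to inference after `months`."""
    inference_total = monthly_inference_cost * months
    return inference_total / (training_cost + inference_total)


def breakeven_month(training_cost, monthly_inference_cost):
    """First whole month in which cumulative inference spend >= training spend."""
    # Integer ceiling division, so whole-number inputs stay exact.
    return -(-training_cost // monthly_inference_cost)


# Hypothetical inputs: a one-time training bill and a flat monthly inference bill.
TRAINING_COST = 10_000_000
MONTHLY_INFERENCE = 1_000_000

for months in (6, 12, 24):
    share = inference_share(TRAINING_COST, MONTHLY_INFERENCE, months)
    print(f"after {months:2d} months: inference share = {share:.3f}")
# after  6 months: inference share = 0.375
# after 12 months: inference share = 0.545
# after 24 months: inference share = 0.706

print("break-even month:", breakeven_month(TRAINING_COST, MONTHLY_INFERENCE))
# break-even month: 10
```

The point of the sketch is the shape of the curve, not the numbers: training is paid once, while inference keeps accruing, so its share of total spend only grows the longer a model stays in production.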

📉 The Big Bet: Software-Defined Compute

The “GPU Tax” is real. Relying on high-end, foreign-sourced silicon for every simple AI task is like using a rocket ship to go to the grocery store. It’s overkill, it’s expensive, and it creates a dangerous supply-chain dependency.

The “Big Bet” for 2026 isn’t more hardware—it’s better math.

We are seeing a shift toward Software-Defined Compute, where clever mathematical runtimes allow “commodity hardware” (the CPUs already in your data centers) to perform like specialized AI chips.

The Landscape: A Quick Comparison

The Hardware Optimizers (e.g., Intel OpenVINO): Great for performance, but they often lock you into a specific vendor’s silicon.

The Compression Camp (e.g., Neural Magic): They speed things up by pruning and quantizing models, but sometimes at the cost of accuracy loss (such as “quantization errors”) that regulated industries can’t afford.

The Frugal Innovators (e.g., Ziroh.com - Kompact AI): This is where the magic is happening. By using their ICAN runtime, they deliver high-precision (BF16) inference directly on standard CPUs.
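For readers who want to see what “quantization error” and “BF16” mean numerically, here is a small sketch using only the Python standard library. It is a generic illustration of two number formats, not a description of how any vendor named above works internally. The helper names and the sample weights are hypothetical, and the int8 scheme shown (one scale for the whole tensor) is the simplest variant; production toolchains typically use finer-grained scaling and calibration.

```python
import struct


def quantize_int8(values):
    """Symmetric per-tensor int8 quantization, then dequantization back to float."""
    scale = max(abs(v) for v in values) / 127.0  # one scale for the whole tensor
    return [max(-127, min(127, round(v / scale))) * scale for v in values]


def to_bf16(x):
    """Round x to bfloat16 (round-to-nearest-even) and return it as a float.

    Sketch only: NaN and infinity are not handled.
    """
    bits = struct.unpack("<I", struct.pack("<f", x))[0]  # float32 bit pattern
    bits += 0x7FFF + ((bits >> 16) & 1)                  # rounding bias
    bits &= 0xFFFF0000                                   # keep sign, exponent, 7 mantissa bits
    return struct.unpack("<f", struct.pack("<I", bits))[0]


def max_relative_error(original, approx):
    """Largest per-element relative error between two equal-length lists."""
    return max(abs(a - o) / abs(o) for o, a in zip(original, approx))


# Hypothetical weights with one large outlier, which stretches the int8 scale.
weights = [0.003, -0.02, 0.15, -0.4, 1.7]

print("int8 max relative error:", max_relative_error(weights, quantize_int8(weights)))
print("bf16 max relative error:", max_relative_error(weights, [to_bf16(w) for w in weights]))
```

With these made-up weights, the naive int8 scheme rounds the smallest value all the way to zero, while BF16 keeps every value within roughly half a percent because it preserves the floating-point exponent. Real models and real quantizers behave better than this toy case, but it shows why precision-sensitive workloads care about the format.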

🇮🇳 India’s Sovereign AI Strategy

Sovereign AI is often misunderstood as simply “keeping data on-soil.” While that is critical (especially under India’s Digital Personal Data Protection Act, the DPDP Act), true sovereignty requires Infrastructure Autonomy.

India’s strength has always been Frugal Innovation. While other nations build “Brute Force” AI, India is pioneering “Efficient AI.”

By leveraging products like Kompact AI, Indian enterprises can:

Break the Silicon Bottleneck: No more waiting for GPU shipments. If you have a server rack, you have an AI cluster.

Achieve FinOps Excellence: Why spend millions on new CapEx when your existing infrastructure can run a Llama 4 or DeepSeek model at production speeds?

Prioritize Green AI: CPU-first inference can reduce energy draw by up to 70%. In 2026, sustainability isn’t a “nice-to-have”; it’s a competitive requirement.

🚀 Key Takeaways for Leaders

If you are drafting your AI roadmap for the rest of the year, here is what you need to remember:

📍 Inference is the P&L Killer: If your inference strategy isn’t optimized, your AI projects will bleed cash.

📍 Decouple from Hardware: Look for “Hardware-Agnostic” solutions. Your software should be smarter than your silicon.

📍 Sovereignty = Efficiency: You aren’t truly sovereign if you are tethered to a foreign cloud or a foreign chip manufacturer. Efficient inference on hardware you already own is what loosens that tether.

The Bottom Line:

The winner of the AI race won’t be the one with the most GPUs. It will be the one who can deliver the most “Intelligence per Watt” and “Intelligence per Dollar.”
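One way to turn “Intelligence per Watt” and “Intelligence per Dollar” into numbers you can track is to use generated tokens as a rough proxy for intelligence. The sketch below assumes exactly that; the `Deployment` class, its field names, and the sample figures are hypothetical placeholders, not benchmarks of any real system.

```python
from dataclasses import dataclass


@dataclass
class Deployment:
    """Measured figures for one inference setup (all values supplied by you)."""
    name: str
    tokens_per_second: float
    power_watts: float
    cost_per_hour_usd: float

    def tokens_per_joule(self) -> float:
        # A watt is one joule per second, so tokens/s divided by W gives tokens/J.
        return self.tokens_per_second / self.power_watts

    def tokens_per_dollar(self) -> float:
        # Tokens produced in one hour divided by the hourly cost.
        return self.tokens_per_second * 3600 / self.cost_per_hour_usd


# Placeholder inputs: replace with your own measurements.
options = [
    Deployment("option_a", tokens_per_second=60, power_watts=200, cost_per_hour_usd=0.50),
    Deployment("option_b", tokens_per_second=300, power_watts=700, cost_per_hour_usd=3.00),
]

for d in options:
    print(f"{d.name}: {d.tokens_per_joule():.3f} tokens/J, "
          f"{d.tokens_per_dollar():,.0f} tokens/$")
```

With these placeholder inputs the two metrics disagree: one option comes out ahead per joule and the other per dollar. That is the practical reason to track both instead of raw throughput alone.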

India is uniquely positioned to lead this “Efficiency Era.” We aren’t just catching up; we are rewriting the rules of the game.

What is your organization’s “Inference Strategy”? Are you scaling with more hardware, or smarter math?
